Bug Mentor - Mentoring developers with Q&A during software bug resolution

A new way to get your questions answered during software bug resolution

Every year, software companies lose billions of dollars maintaining and fixing their software. Their developers spend nearly half of their time identifying and resolving issues and improving their existing code. Software bugs or defects are almost inevitable, but they can be managed effectively with appropriate tools and processes. One of the major challenges of bug resolution is capturing enough useful information early on. Once a bug is reported, developers often ask follow-up questions on issue-tracking systems (e.g., Bugzilla, GitHub). Unfortunately, many of their questions go unanswered, leading to delays in software bug resolution or, in the worst case, no resolution at all. Enter BugMentor, a Gen AI-powered tool to streamline the Q&A during bug resolution.

Dr. Masud Rahman and his graduate student, Usmi Mukherjee, at the RAISE Lab at Dalhousie University have been working on this problem for years. Their recent work, Bug Mentor [1], a novel Gen AI-based solution, aims to answer developers’ questions that may arise when resolving software bugs.

How does it work?

Bug Mentor has three major steps in its workflow: (1) constructing a corpus, (2) constructing an appropriate context, and (3) generating answers to developers’ questions using Large Language Models (LLMs). In modern Gen AI terminology, this workflow resembles Retrieval-Augmented Generation (RAG), in which relevant information is first retrieved from a knowledge source and then provided to the LLM to generate an informed answer.

According to existing studies [3], ~40% of the issue reports in an issue-tracking system describe the same or similar issues. These past reports can be a rich source of details that help developers understand a new bug and may answer questions that would otherwise go unanswered. Bug Mentor adopts a practical approach to take advantage of this information.

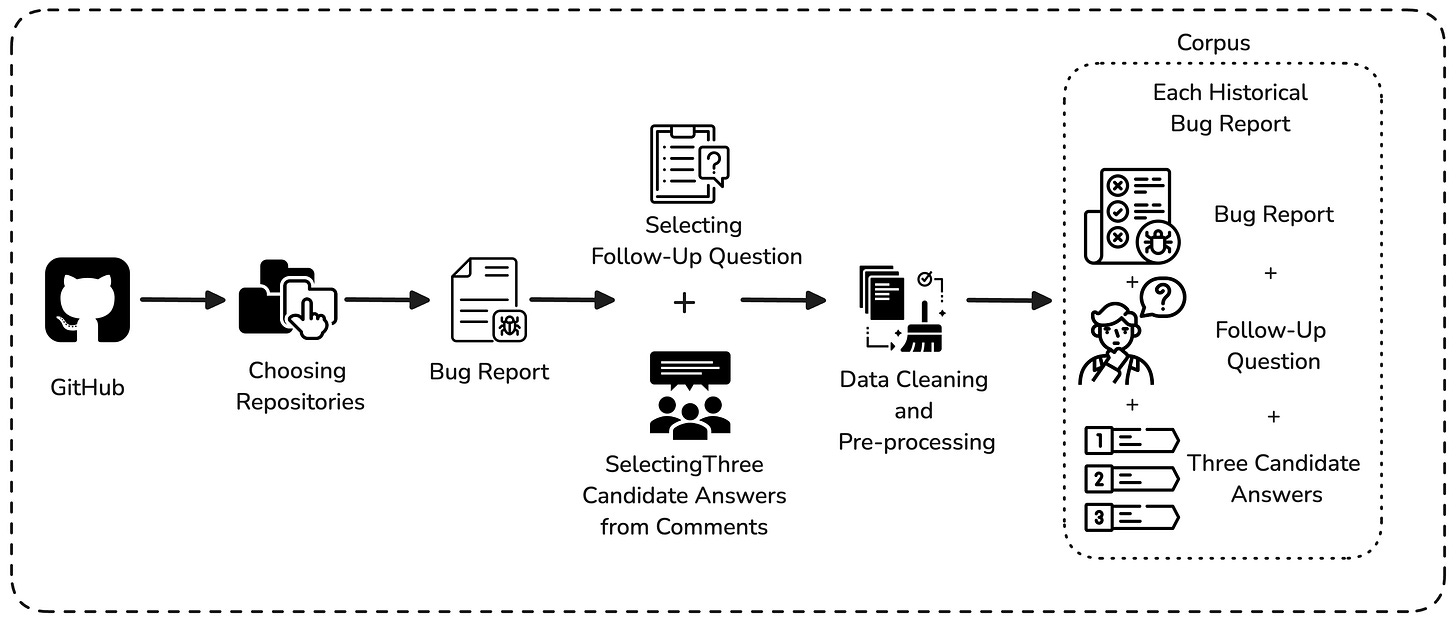

Step 1: Bug Mentor constructs a corpus by collecting past bug reports, the questions posed against them, and the answers they received. This corpus serves as a historical knowledge base of past bugs that the later steps draw on.
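To make the idea of a corpus more concrete, here is a minimal Python sketch of how past reports and their Q&A could be stored. The class names, field names, and the rule of keeping only reports with at least one answered question are assumptions made for illustration; they are not taken from the Bug Mentor codebase.

```python
from dataclasses import dataclass, field


@dataclass
class QAPair:
    """One follow-up question on a bug report and the answer it received."""
    question: str
    answer: str


@dataclass
class BugReport:
    """A past bug report with its follow-up Q&A (field names are illustrative)."""
    report_id: str
    title: str
    summary: str
    qa_pairs: list[QAPair] = field(default_factory=list)


def build_corpus(raw_reports: list[dict]) -> list[BugReport]:
    """Keep reports that have at least one answered question (an assumed rule)."""
    corpus = []
    for raw in raw_reports:
        pairs = [
            QAPair(q["question"], q["answer"])
            for q in raw.get("questions", [])
            if q.get("answer")  # skip questions that never received an answer
        ]
        if pairs:
            corpus.append(BugReport(raw["id"], raw["title"], raw["summary"], pairs))
    return corpus


# Made-up sample data: the second report has no answered question.
raw = [
    {"id": "101", "title": "Crash on startup", "summary": "App crashes when opened.",
     "questions": [{"question": "Which OS version?", "answer": "Windows 11"}]},
    {"id": "102", "title": "Slow scrolling", "summary": "Page scroll lags.",
     "questions": [{"question": "Any extensions installed?", "answer": ""}]},
]
corpus = build_corpus(raw)
```

In this sketch, only report 101 ends up in the corpus, because report 102 has no answered question to learn from.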

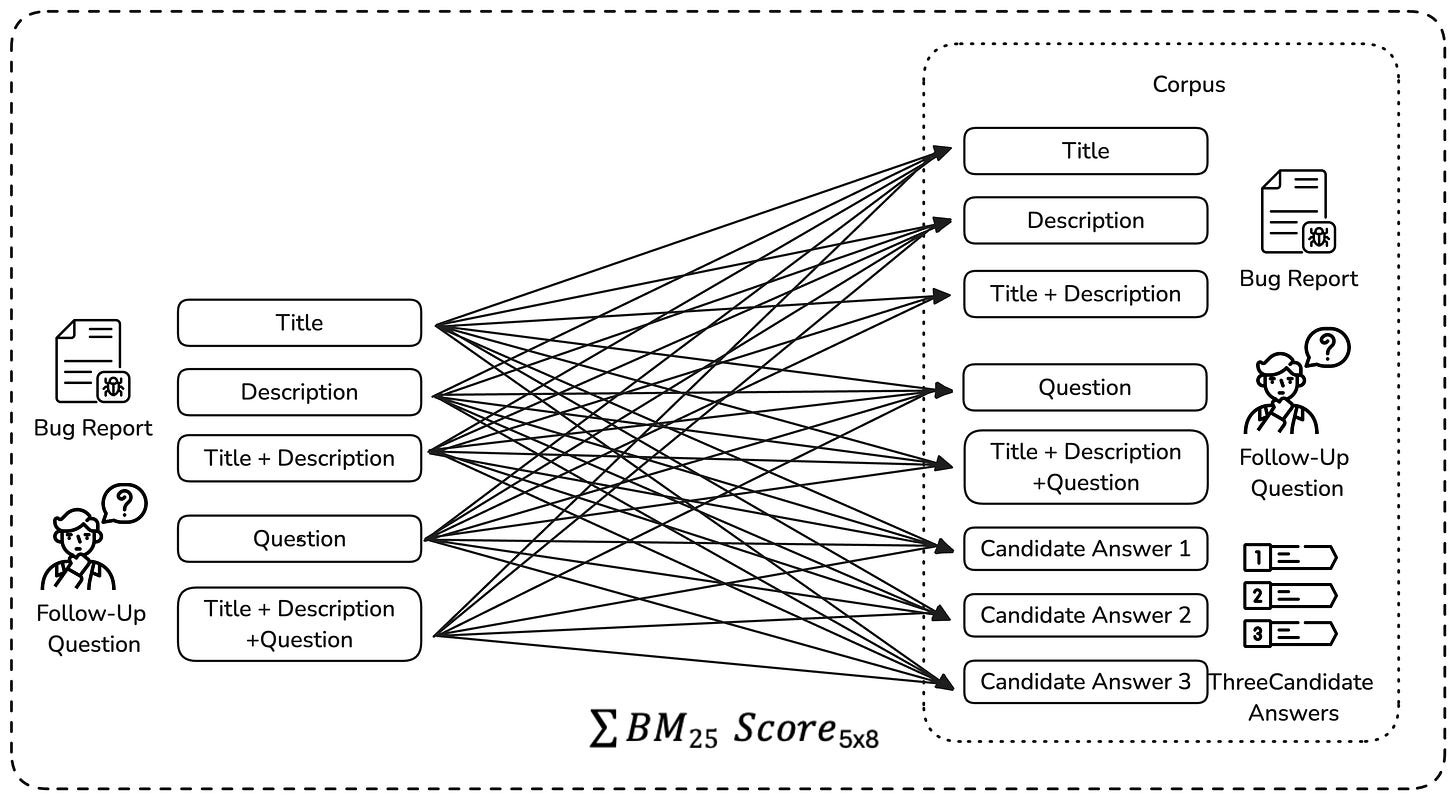

Step 2: Because the context window of a modern LLM is limited (i.e., the model can process only so much information at once), placing the entire corpus in the context would be impractical and computationally very expensive. The context is a valuable resource and should be used carefully, which is the essence of context engineering [5]. Thus, Bug Mentor selects the most useful details to include in the context window by retrieving similar bug reports, along with their questions and answers, from the corpus above. In particular, it divides each bug report into multiple parts (e.g., title, summary, questions) and uses a well-known ranking algorithm, such as BM25 [4], to pick out the most relevant pieces of information from the corpus. This information forms the context given to the LLM when it is asked about the new bug report.
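For readers who want to see what such a retrieval step might look like, below is a small, self-contained Python sketch of Okapi BM25 applied to each part of a bug report. The tokenizer, the parameter values `k1` and `b`, the sample reports, and the choice to simply add up the per-field scores are all assumptions for illustration; the paper [1] describes the approach Bug Mentor actually uses.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep alphanumeric tokens only."""
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score every document against the query with Okapi BM25."""
    if not docs:
        return []
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: in how many documents each term appears.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in set(tokenize(query)):  # each distinct query term once
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def rank_reports(query, reports, fields=("title", "summary", "questions"), k=2):
    """Sum per-field BM25 scores (an assumed combination) and return the top k."""
    totals = [0.0] * len(reports)
    for name in fields:
        field_scores = bm25_scores(query, [r[name] for r in reports])
        totals = [t + s for t, s in zip(totals, field_scores)]
    order = sorted(range(len(reports)), key=lambda i: totals[i], reverse=True)
    return [(i, totals[i]) for i in order[:k] if totals[i] > 0]


# Made-up past reports, each split into three parts.
reports = [
    {"title": "Crash on startup", "summary": "Browser crashes after the latest update",
     "questions": "Which OS version are you using?"},
    {"title": "Slow scrolling", "summary": "Scrolling lags with long pages",
     "questions": "Are any extensions installed?"},
    {"title": "Startup is slow", "summary": "Startup takes a minute with a large profile",
     "questions": "How large is the profile?"},
]
ranked = rank_reports("crash on startup", reports)
```

Here the first report ranks highest because its title matches all three query terms, the third report comes second, and the second report is left out because it shares no terms with the query.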

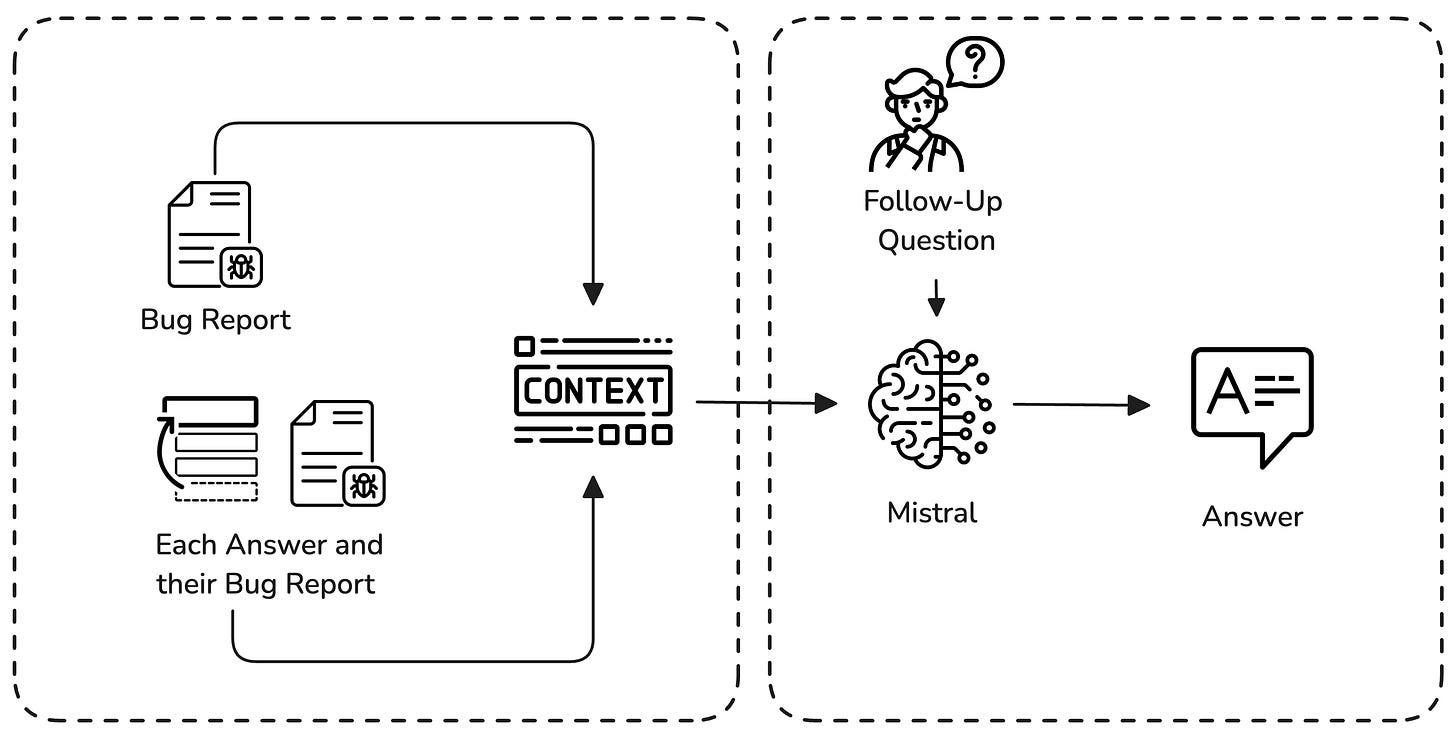

Step 3: With the context prepared, Bug Mentor is now ready for action. When the developer asks a question about the new bug, both the question and the context are given to the LLM, which generates the corresponding answer. Currently, Bug Mentor employs open-weight models such as Mistral and Llama for its Q&A, so that others can reproduce the results and inspect the process. According to a recent experiment [1], Bug Mentor achieves a BLEU score of ~72, indicating encouraging results in answering bug-related questions. BLEU is a metric that measures how closely generated text matches reference text.
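As a rough illustration of this last step, the Python sketch below assembles a prompt from retrieved Q&A pairs and the developer's question. The prompt wording, the `build_prompt` function, the dictionary keys, and the character budget are hypothetical and not taken from Bug Mentor; the resulting string would then be sent to whichever open-weight model is in use.

```python
def build_prompt(new_report: str, similar: list[dict], question: str,
                 max_chars: int = 4000) -> str:
    """Assemble a prompt from retrieved Q&A context and the developer's question."""
    context_blocks = []
    used = 0
    for item in similar:
        block = (
            f"Past bug: {item['title']}\n"
            f"Q: {item['question']}\n"
            f"A: {item['answer']}\n"
        )
        # Stop adding context once the (assumed) character budget is spent.
        if used + len(block) > max_chars:
            break
        context_blocks.append(block)
        used += len(block)
    context = "\n".join(context_blocks)
    return (
        "Answer the question about the new bug report using the past reports below.\n\n"
        f"{context}\n"
        f"New bug report: {new_report}\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Made-up retrieved context for a new report.
similar = [
    {"title": "Crash on startup", "question": "Which OS version?", "answer": "Windows 11"},
]
prompt = build_prompt("App crashes when opening settings", similar, "Which OS is affected?")
```

The budget check reflects the point made in Step 2: the context is limited, so only as much retrieved material as fits is included.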

Why does it matter?

According to an existing study, 32% of the questions that developers ask to understand a problem go unanswered during issue resolution [2]. Expecting quick answers from the people who submit bug reports is not always practical; they might not know all the answers. In large projects (e.g., Mozilla Firefox), developers wait two weeks for such answers and then close the report if none has been received [6]. This lack of necessary information delays the assignment of bug reports to developers and hurts bug reproducibility efforts, leading to delays in software delivery and poor-quality software updates. Bug Mentor comes to the developers’ rescue in this circumstance. It can serve as a first-line AI assistant that helps fill in missing details through interactive question-and-answer support.

Where can Bug Mentor help the most?

Bug Mentor can be especially useful for large projects with years of evolution history and a high number of bug reports. In such settings, finding the key information needed to debug the problem can take significant time. It can also benefit organizations where knowledge about how issues are prioritized and assigned is spread across multiple developers, making bug resolution slower and more dependent on individual experience. In open-source or collaborative projects with contributors from around the world, where reporters or contributors may respond slowly or inconsistently, Bug Mentor can help fill in missing important details. It may also be useful for legacy systems, where much of the original debugging knowledge is buried in older issue discussions. For new developers, Bug Mentor can act as a helpful guide by bringing up useful information from past issues and reducing the learning curve during software debugging.

What’s next?

BugMentor still has a long way to go before it can be deployed in real-world software environments. Currently, it is an early-stage prototype, available on GitHub and open for further development and improvement by both researchers and practitioners. For anyone curious to explore, here are a few links:

Read the full paper here

Check out the GitHub codebase: BugMentor

Check out the replication package

References

[1] Usmi Mukherjee and M. Masudur Rahman. BugMentor: Generating Answers to Follow-up Questions from Software Bug Reports using Structured Information Retrieval and Neural Text Generation. Journal of Systems and Software (JSS), pp. 31, 2025.

[2] Silvia Breu, Rahul Premraj, Jonathan Sillito, and Thomas Zimmermann. Information needs in bug reports: improving cooperation between developers and users. In Proceedings of the 2010 ACM conference on Computer-supported cooperative work, pp. 301–310, 2010.

[3] John Anvik. Automating bug report assignment. In Proceedings of the 28th International Conference on Software Engineering (ICSE ’06), pp. 937–940, 2006.

[4] Stephen Robertson and Hugo Zaragoza. The Probabilistic Relevance Framework: BM25 and Beyond. Foundations and Trends in Information Retrieval, 3(4), pp. 333–389, 2009.

[5] Anthropic. Effective context engineering for AI agents. https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents

[6] M. Masudur Rahman, Foutse Khomh, and Marco Castelluccio. Why are Some Bugs Non-Reproducible? An Empirical Investigation using Data Fusion. In Proceedings of the 36th International Conference on Software Maintenance and Evolution (ICSME), pp. 12, Adelaide, Australia, 2020.